World-class universities, light-touch regulation and a history of technological innovation are all regularly cited as reasons for multinational companies to base facilities in the UK.

The quality of the UK’s weather does not usually come to mind as a factor when deciding on the Midlands over, say, Silicon Valley. But according to UK self-driving body ZENZIC, whose job it is to build the market for connected and autonomous vehicles (CAVs), the nation’s changeable weather conditions single it out.

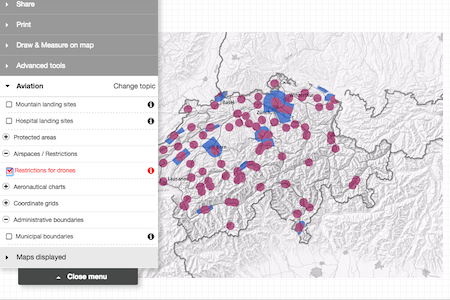

“Testing in the UK means you can expect a variety of conditions, from rain to sunshine to hail – sometimes within a day,” its online brochure for the Testbed UK facilities states. Testbed UK is the name for a network of testing sites for autonomous vehicles based across the Midlands and the South East of England.

In September, the latest addition to the network was opened at the Millbrook proving ground: a 6,300 sq ft high-tech “autonomous village” designed to support the testing and development of CAVs by simulating an urban environment. The facilities were partly funded by ZENZIC and the Centre for Connected and Autonomous Vehicles (CCAV) and opened by George Freeman, a government minister in charge of “The Future of Transport”.

A report published by the Society of Motor Manufacturers and Traders and consultants Frost & Sullivan in April claimed the UK was in pole position in the global race to become a world leader in CAV, which it said could inject £62bn into the economy by 2030. It cited supportive regulation as a key driver for the development of the CAV sector, along with an enabling infrastructure and a strong market. However, it did also warn that this was dependent on the UK securing a favourable Brexit deal with the EU.

In October the Law Commission of England and Wales and the Scottish Law Commission launched their latest consultation round on the regulation of driverless cars in which they argued that a unified regulatory regime was needed to replace the current system, which sees different types of services regulated by different sets of rules.

The move was welcomed by Ben Gardner, a specialist in driverless car law at Pinsent Masons, as “another positive sign for the UK’s position as a global leader in automated vehicle technologies”.

The consultation is part of a range of regulatory and legal milestones detailed in a roadmap published by ZENZIC in September that sets out “to ensure the UK has a world class and mature legal and regulatory framework that enables CAM to be deployed at scale”.

The spur for this project is a new industrial strategy launched by Theresa May’s Conservative government in 2018 that established four “grand challenges” for the UK, one of which is for it to become “a world leader in shaping the future of mobility”.

Interventionist strategy

The strategy – somewhat overshadowed by the UK’s Brexit travails – heralded a shift towards a more interventionist industrial policy by the government from the free market approach, which was pioneered by the then UK prime minister Margaret Thatcher in the 1980s.

It draws on the work of Mariana Mazzucato, professor of economics at University College London and director of the Institute for Innovation and Public Purpose, who advocates the benefits of the public and private sector working together to complete clearly defined and ambitious missions.

She cites US President John F Kennedy’s pledge to achieve a moon landing by the end of the 1960s as a successful example of this approach.

The industrial strategy has its critics, notably for its focus on the most successful UK sectors, as opposed to those where productivity is lagging, such as retail and hospitality. There are also question marks over the level of public money being put behind the strategy when compared to the scale of the stated ambitions, although the spending splurge promised by both the Labour and Conservative parties in the run-up to the UK’s December national elections may change that.

But the good news for technology lawyers is that advanced technologies feature heavily in all four grand challenges, the others being: clean growth, harnessing innovation to cope with an ageing society and, most notably, putting the UK at the forefront of the AI and data revolution.

The UK AI “deal” featured a £603m funding package for AI and up to £324m from existing government funding.

AI policy

To oversee this programme, the government set up three new bodies: the AI Council, which is made up of leading business figures and academics with a brief to boost growth of AI and promote its adoption and ethical use; a government Office for Artificial Intelligence; and the Centre for Data Ethics and Innovation, which is tasked “to connect policymakers, industry, civil society, and the public to develop the right governance regime for data-driven technologies”.

Just as is the case with the CAV market, commentators and policy makers have stressed the need for the development of new laws and regulations to underpin the use of AI.

“To operate in an efficient and secure manner, business and markets need the certainty of law and regulation,” Macfarlanes partners Richard Burrows and Alex Edmondson observed, in a legal briefing. “If Britain is to be a world leader in AI technology, parliament and the courts will have to provide that certainty and do so now. Analogue law needs to evolve far more quickly to maintain relevance in our new digital world.”

The pair highlighted the minimisation of bias in AI decision making, the allocation of liability when AI causes harm and the impact of AI on workforces and supply chains as among key areas to be addressed.

The debate over whether AI needs its own set of rules or can be incorporated into the existing regulatory frameworks is ongoing – a proposal by the European Parliament for AI to be treated as electronic persons and therefore be held liable for damages was rejected in a letter to the European Commission signed by more than 150 AI experts, including academics and business leaders.

In the meantime, the UK’s regulators – in common with regulators across the world – are already grappling with the challenges associated with this technology.

Black box problem

AI’s so-called 'black box problem' – the inability to explain how decisions are made – is among the challenges associated with AI that are currently being investigated by the UK’s Information Commissioner’s Office (ICO), whose job it is to enforce the EU’s GDPR rules in the UK.

Given the recent controversies around the collection and use of personal data by the likes of Facebook and Google, the ICO will be a key UK player in the development of an effective regulatory regime for AI.

It is currently consulting on the development of an auditing framework for AI in a process that has involved citizens’ juries and roundtables with industry groups.

“When adopting AI applications, data controllers will have to assess whether their existing governance and risk management practices remain fit for purpose,” ICO AI research fellow Reuben Binns and technology policy adviser Valeria Gallo wrote in a blog post published on 26 March. “This is because AI applications can exacerbate existing data protection risks, introduce new ones, or generally make risks more difficult to spot or manage. At the same time, detriment to data subjects may increase due to the speed and scale of AI applications.”

One area for investigation is 'automation bias' – the danger that humans can become overly reliant on recommendations generated by AI to the extent that they don’t question them sufficiently and, if they do, are reluctant to flag concerns due to the pervading corporate culture.

The ICO’s stated goal is to help companies design compliance into their AI systems at the development stage so unanticipated problems do not emerge later.

In June, Binns and Gallo told a Society for Computers and Law (SCL) podcast they were keen to engage with the legal profession as part of the consultation process. “Our role isn’t to stop innovation, but we do need to ensure it doesn’t come at the expense of data subjects’ fundamental rights and freedoms,” said Gallo.

Cryptoassets

Another key UK regulator tackling the challenges associated with AI and other advanced technologies is the Financial Conduct Authority (FCA). In August, it issued a set of guidelines to help businesses conducting cryptoasset activities comply with the UK’s financial services regulations that were broadly welcomed by the legal community.

In a briefing, Pinsent Masons partner Rory Copeland said the guidance diverged from the regulatory approach adopted by the European Banking Authority by “repositioning the cryptoasset taxonomy” and opening up the way for more innovation in blockchain cross-border settlement systems. “Many banks, settlement associations and enterprise blockchains will be elated at the guidance that the use of exchange tokens as a remittance vehicle does not bring them within the regulatory perimeter,” he observed.

The FCA has also won praise for its oversight of the rollout of open banking in the UK, which is regarded as having ensured the City of London remains a world leader in fintech innovation. Notably, in 2016 it launched its much-imitated sandbox scheme, which provides selected start-ups with a safe space in which to test their products in the market.

Technology, privacy and financial services lawyer Mark Sherwood-Edwards, who hosts the Fintech Legal podcast, said, “I think the FCA is globally perceived as innovative and the sandbox is a good example of that. It knows not to go in too early, allowing ideas to bubble away before it intervenes and decides whether they are a plant or a weed.”

Brexit

Proponents of Brexit argue that the UK’s departure from the EU – if indeed it does take place – will allow regulators to be even more creative. The Conservative election manifesto unsurprisingly singles out EU regulations as a barrier to innovation.

But what do the lawyers think? In the latest SCL barometer survey of its members, 20 per cent of respondents said Brexit would have an adverse impact on their work, while 30 per cent disagreed. Fifty per cent were unsure. However, this uncertainty doesn’t prevent the respondents from being bullish about their business prospects, with 60 per cent expecting their tech law work to increase and just 24 per cent anticipating a decline.

The swift adoption of AI and other advanced technologies in the UK, especially within its burgeoning financial services sector but also in other areas where the country enjoys an edge such as pharmaceuticals and the emerging CAV market, may be creating headaches for regulators but it is also generating work for lawyers.

And the next time tech lawyers are forced indoors by wet and windy British weekend weather, they can console themselves with the thought that these are the perfect conditions in which to test autonomous vehicles.
